Systematic Literature Review and Meta-Analysis - Overcoming Selection Bias

https://www.humantruth.info/systematic_literature_review.html

By Vexen Crabtree 2017

#science #scientific_method #selection_bias #thinking_errors

To prevent errors, it is best to take a methodical approach to evaluating evidence. In the scientific method this is called Systematic Review or Literature Review. Combining statistical data from multiple experiments into a single data-set is called a meta-analysis, a process which makes statistical analysis more accurate1,2. Specific search criteria are laid out before any evidence is sought, and each source of information is methodically judged against pre-set criteria before its results are looked at. This eliminates, as much as possible, the human bias whereby we subconsciously find ways of dismissing evidence we don't agree with. Only then do we tabulate the results of each source and see which sources confirm or disconfirm our idea. The Cochrane Collaboration does this kind of research on healthcare subjects, and as a result of its gold-standard methodical approach it has "saved more lives than you can possibly imagine"3. Systematic Literature Review is thus one of the most important checks-and-balances of the scientific method.

1. Selection Bias and Confirmation Bias

“

“It is the peculiar and perpetual error of the human understanding to be more moved and excited by affirmatives than negatives.”

Francis Bacon (1620)4

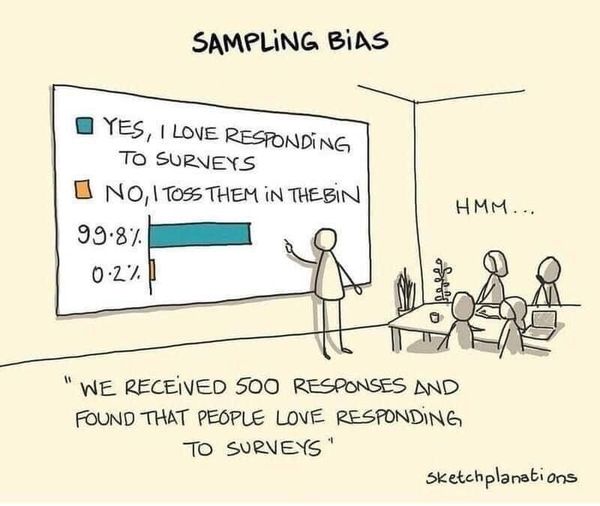

Selection Bias is the result of poor sampling techniques5 whereby we use partial and skewed data in order to back up our beliefs. It includes confirmation bias, which is the unfortunate way in which we humans tend to notice evidence that supports our ideas, ignore evidence that contradicts them6,7, and actively seek out flaws in evidence that contradicts our opinion (while automatically accepting evidence that supports us)8,9. We are all pretty hard-working when it comes to finding arguments that support us, and when we find opposing views, we work hard to discredit them. But we don't work hard to discredit those who agree with us. In other words, we work at undermining sources of information that sit uncomfortably with what we believe, and we idly accept those that agree with us.

“If we believe a correlation exists, we notice and remember confirming instances. If we believe that premonitions correlate with events, we notice and remember the joint occurrence of the premonition and the event's later occurrence. We seldom notice or remember all the times unusual events do not coincide. If, after we think about a friend, the friend calls us, we notice and remember the coincidence. We don't notice all the times we think of a friend without any ensuing call, or receive a call from a friend about whom we've not been thinking.

People see not only what they expect, but correlations they want to see. This intense human desire to find order, even in random events, leads us to seek reasons for unusual happenings or mystifying mood fluctuations. By attributing events to a cause, we order our worlds and make things seem more predictable and controllable. Again, this tendency is normally adaptive but occasionally leads us astray.”

"Social Psychology" by David Myers (1999)6

Our brains are good at jumping to conclusions, and it is often hard to resist the urge. We often feel clever, even while deluding ourselves! If a bus is late twice in a row on Tuesdays whilst we are standing there waiting for it, we try to work out the cause. We would do much better to accurately note on how many other days it is late, and how many times it isn't late on a Tuesday. But rare is the person who engages methodically in such trivial investigations. We normally just go with the flow, and think we have arrived at sensible conclusions based on the data we happen to have observed in our own little bubble of life.

All of this is predictable enough for an individual, but another form of Selection Bias occurs in mass media publications too. Cheap tabloid newspapers publish every report that shows foreigners in a bad light, or shows that crime is bad, or that something-or-other is eroding proper morality. And they mostly avoid any reports that say the opposite, because such reassuring results do not sell as well. Newspapers as a whole simply never report anything boring - therefore they constantly support world-views that are divorced from everyday reality. Sometimes commercial interests skew the evidence that we are exposed to. Drugs companies conduct many pseudo-scientific trials of their products, but their choice of what to publish is manipulative - studies in 2001 and 2002 show that "those with positive outcomes were nearly five times as likely to be published as those that were negative"10. Interested companies just have to keep paying for studies to be done, wait for a misleading positive result, and then publish it and make it the basis of an advertising campaign. The public have few resources to overcome this kind of orchestrated Selection Bias.

RationalWiki mentions that "a website devoted to preventing harassment of women unsurprisingly concluded that nearly all women were victims of harassment at some point"11. Another example, a statistic that is most famous for being wrong, is that one in ten males is homosexual. This was based on a poll of the community surrounding a gay-friendly publication - the sample of respondents was skewed away from the true average, and hence misleading data was observed.

The only solution to these problems is proper and balanced statistical analysis of data that has been actively and methodically gathered, alongside raising awareness of Selection Bias, Confirmation Bias, and other thinking errors. This level of critical thinking is quite a rare endeavour in anyone's personal life - we don't have the time, the inclination, the confidence, or the skill to properly evaluate subjective data. Unfortunately, fact-checking is also rare in mass media publications. Personal opinions and news outlets ought to be given little trust.”

"Selection Bias and Confirmation Bias: 1. Poor Sampling Techniques" by Vexen Crabtree (2017)
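The late-bus example above can be made concrete with a small tally. The following is only a sketch: the observation log is invented for illustration, and the function name `late_rates` is hypothetical. It shows why noticing "late twice on a Tuesday" tells us nothing until we also count the Tuesdays the bus was on time and how often it is late on other days.

```python
from collections import Counter

# Hypothetical log of bus observations: (weekday, was_late).
# These data are invented purely for illustration.
observations = [
    ("Tue", True), ("Tue", True), ("Tue", False), ("Tue", False),
    ("Mon", True), ("Wed", False), ("Thu", True), ("Fri", False),
    ("Mon", False), ("Wed", True), ("Thu", False), ("Fri", True),
]


def late_rates(log, day="Tue"):
    """Return (late rate on `day`, late rate on all other days)."""
    # Count all four cells of the 2x2 table: (is it `day`?, was it late?).
    counts = Counter((weekday == day, late) for weekday, late in log)
    on_day = counts[(True, True)] + counts[(True, False)]
    other = counts[(False, True)] + counts[(False, False)]
    rate_day = counts[(True, True)] / on_day if on_day else float("nan")
    rate_other = counts[(False, True)] / other if other else float("nan")
    return rate_day, rate_other


tuesday_rate, other_rate = late_rates(observations)
print(f"Late on Tuesdays: {tuesday_rate:.0%}, late on other days: {other_rate:.0%}")
```

In this made-up log the bus is late just as often on other days as on Tuesdays, so the two memorable Tuesday delays were no pattern at all. Real data would also need enough observations for any difference to be meaningful.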

2. Individual Efforts

It is very rare to find an individual who manages to critically analyze a high proportion of their own beliefs. A simple piece of advice is to write down possible doubts and see if you can convince yourself to seriously investigate their truth. Charles Darwin engaged in such an endeavour:

“[I] followed a golden rule, whenever a new observation or thought came across me, which was opposed to my general results, to make a memorandum of it without fail and at once; for I had found by experience that such facts and thoughts were far more apt to escape from the memory than favourable ones.”

Charles Darwin

In Goldacre (2008)12

Another common technique that we all employ is to sometimes ask our friends and colleagues for advice. In a friendly and open atmosphere, we are less likely to be able to defend unfounded beliefs. The disadvantage is that our intellectual opponents in this arena are just as likely to also fall foul of uncontrolled thinking errors. Qualified research, conducted by professionals, is much more likely to lead to more sensible conclusions.

3. Formal Methods

3.1. Literature Review

“A literature review can be an informative, critical, and useful synthesis of a particular topic. It can identify what is known (and unknown) in the subject area, identify areas of controversy or debate, and help formulate questions that need further research. [...] A good review is characterized by the author's efforts to evaluate and critically analyze the relevant work in the field. Published reviews can be invaluable, because they collect and disseminate evidence from diverse sources and disciplines to inform professional practice on a particular topic.”

"Writing an Effective Literature Review" by Amanda Bolderston (2008)

3.2. Meta-Analysis

“Reviewers can be highly selective [and] interpret results with their own theoretical focus [and overlook] oddities. Meta-Analysis is a relatively recent approach to this problem, employing a set of statistical techniques in order to use the results of possibly hundreds of studies of the same or similar hypotheses and constructs. Studies are thoroughly reviewed and sorted as to the suitability of their hypotheses and methods... the collated set of acceptable results forms a new 'data set' [combined] in a single study.”

"Research Methods and Statistics in Psychology" by Hugh Coolican (2004)1

“A meta-analysis is a study that lumps together the data from several independent studies and does a statistical analysis on the data as if they were collected in a single, large study. One of the major problems with meta-studies is that researchers must be selective in choosing which studies to include in their analysis. Some studies will have to be rejected because they are fatally flawed: they're too small, use no controls, didn't randomize the assignment of subjects, or the like.”

"Unnatural Acts: Critical Thinking, Skepticism, and Science Exposed!" by Robert Todd Carroll (2011)2

This last problem re-introduces selection bias, and so a systematic search strategy should be used, as per the next formal method.
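As a rough illustration of how results from several studies can be combined into one estimate, here is a minimal sketch of fixed-effect, inverse-variance pooling. This is one common meta-analytic technique and is an assumption on our part, not necessarily the method the authors quoted above have in mind. The study figures and the function name `pool_fixed_effect` are hypothetical.

```python
import math

# Hypothetical study results: (effect estimate, standard error).
# The numbers are invented for illustration only.
studies = [(0.30, 0.20), (0.10, 0.10), (0.25, 0.25)]


def pool_fixed_effect(results):
    """Combine estimates by inverse-variance weighting (fixed-effect model)."""
    # More precise studies (smaller standard error) receive more weight.
    weights = [1.0 / se ** 2 for _, se in results]
    total = sum(weights)
    pooled = sum(w * est for w, (est, _) in zip(weights, results)) / total
    pooled_se = math.sqrt(1.0 / total)
    return pooled, pooled_se


pooled, pooled_se = pool_fixed_effect(studies)
print(f"Pooled effect: {pooled:.3f} (standard error {pooled_se:.3f})")
```

The pooled standard error is smaller than that of any single study, which is the sense in which combining data makes the analysis more accurate. The sketch does nothing about which studies are included in the first place, and that is exactly where selection bias can creep back in.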

3.3. Systematic Review

“That solution is a process called 'systematic review'. Instead of just mooching around online and picking out your favourite papers to back up your prejudices and help you sell a product, in a systematic review you have an explicit search strategy. [...] You tabulate the characteristics of each study you find, you measure - ideally blind to the results - the methodological quality of each one (to see how much of a 'fair test' it is), you compare alternatives, and then finally you give a critical, weighted summary.”

"Bad Science" by Ben Goldacre (2008)3
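The steps Goldacre describes can be sketched in code. This is only an illustration under stated assumptions: the `Study` fields, the three quality criteria, the `MIN_SAMPLE_SIZE` threshold, and the choice to weight each result by its quality score are all hypothetical, and the study data are invented. The point it shows is that the quality score is computed from methodology alone, without looking at each study's result.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Study:
    name: str
    randomized: bool
    has_control: bool
    sample_size: int
    effect: float  # the reported result; not consulted when scoring quality


MIN_SAMPLE_SIZE = 30  # hypothetical threshold, fixed before the search begins


def quality_score(study):
    """Score methodology only (0-3); deliberately never reads study.effect."""
    score = 0
    if study.randomized:
        score += 1
    if study.has_control:
        score += 1
    if study.sample_size >= MIN_SAMPLE_SIZE:
        score += 1
    return score


def systematic_summary(studies, min_quality=2):
    """Apply the pre-set criteria, then give a quality-weighted mean effect."""
    scored = [(s, quality_score(s)) for s in studies]
    included = [(s, q) for s, q in scored if q >= min_quality]
    if not included:
        return None, []
    total = sum(q for _, q in included)
    summary = sum(q * s.effect for s, q in included) / total
    return summary, [s.name for s, _ in included]


# Invented studies, for illustration only.
studies = [
    Study("A", randomized=True, has_control=True, sample_size=120, effect=0.10),
    Study("B", randomized=False, has_control=False, sample_size=12, effect=0.90),
    Study("C", randomized=True, has_control=True, sample_size=20, effect=0.20),
    Study("D", randomized=False, has_control=True, sample_size=80, effect=0.30),
]

summary, included = systematic_summary(studies)
print(f"Included: {included}, weighted summary effect: {summary:.3f}")
```

Here the small, uncontrolled study "B" is excluded because of its methods, not because of its striking result. A real systematic review would also document the search strategy itself and would typically use a more principled weighting scheme than a simple quality score.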